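The "lobster" (ClawBot/OpenClaw) is all the rage. As an assistant agent that stays on call in the background around the clock, it can do more than many other assistants. To keep it from damaging their everyday computer, most people deploy it in a separate, isolated environment. Because it has to run 24 hours a day, that means either the cloud, a Mac mini, or some quieter, more power-efficient machine. Jumping on the bandwagon, I tried deploying it myself. This post records the main steps of the deployment and of connecting it to Feishu.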

Thanks to Wasai (哇塞) for providing a server. In the end, though, I completed the deployment on my own server. I hope OpenClaw doesn't wreck it.

Deployment

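Normally, we can install directly with the install script, using the following command.

curl -fsSL https://openclaw.ai/install.sh | bash

However, the curl on Wasai's machine is too old, and the machine runs CentOS, which I am not very familiar with. So I switched to installing via npm.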
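Before running the installer on an old machine, it may be worth checking the curl version first. The following is only a minimal sketch: the helper names `version_ge` and `curl_version` are my own, and the threshold 7.52.0 is the release in which, to my knowledge, curl gained the `--retry-connrefused` option that the installer uses.

```shell
#!/usr/bin/env bash
# Sketch: decide up front whether the local curl is new enough for the installer.
# Assumption: --retry-connrefused first appeared in curl 7.52.0.

# version_ge A B: succeeds when version A >= version B (relies on GNU sort -V).
version_ge() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# curl_version: prints the version number from the first line of `curl --version`.
curl_version() {
  curl --version | awk 'NR == 1 { print $2 }'
}

# Usage (not executed here):
#   if version_ge "$(curl_version)" 7.52.0; then
#     echo "curl is new enough"
#   else
#     echo "curl is too old for the install script"
#   fi
```

The comparison is done with `sort -V` rather than string comparison, because plain string ordering would rank 7.9.0 above 7.52.0.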

🦞 OpenClaw Installer

Because Threads wasn't the answer either.

✓ Detected: linux

Install plan

OS: linux

Install method: npm

Requested version: latest

[1/3] Preparing environment

· Node.js not found, installing it now

· Installing Node.js via NodeSource

· Installing Linux build tools (make/g++/cmake/python3)

✓ Build tools installed

curl: option --retry-connrefused: is unknown

First, install the npm environment, that is, Node.js, as follows:

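# First install NVM to manage Node.js versions, so we are not tied to the system's outdated packages
curl -o- https://raw.githubusercontent.com/nvm-sh/nvm/v0.40.4/install.sh | bash

# Then install a stable version
nvm install v22

After installation, checking with node -v showed that the runtime libraries Node.js depends on are missing (once again, the CentOS system is simply too old).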

So I switched to installing Node.js via Snap. Save the following commands as snap.sh and run it:

# Install the EPEL repository and switch it to the archive mirror
sudo yum install -y https://archives.fedoraproject.org/pub/archive/epel/7/x86_64/Packages/e/epel-release-7-14.noarch.rpm
sudo sed -i 's/^mirrorlist/#mirrorlist/g' /etc/yum.repos.d/epel.repo
sudo sed -i 's|#baseurl=http://download.fedoraproject.org/pub/epel|baseurl=http://archives.fedoraproject.org/pub/archive/epel|g' /etc/yum.repos.d/epel.repo

# Install snapd
sudo yum install -y snapd
sudo systemctl enable --now snapd.socket
sudo ln -s /var/lib/snapd/snap /snap

Install Node.js:

sudo snap install node --channel=22/stable --classic

Add the snap binaries to PATH:

echo 'export PATH=$PATH:/snap/bin' >> ~/.bashrc
source ~/.bashrc

The dependency problems were still there:

/var/lib/snapd/snap/node/11315/bin/node: /lib64/libstdc++.so.6: version `GLIBCXX_3.4.30' not found (required by /var/lib/snapd/snap/node/11315/bin/node)

/var/lib/snapd/snap/node/11315/bin/node: /lib64/libstdc++.so.6: version `GLIBCXX_3.4.21' not found (required by /var/lib/snapd/snap/node/11315/bin/node)

/var/lib/snapd/snap/node/11315/bin/node: /lib64/libstdc++.so.6: version `GLIBCXX_3.4.20' not found (required by /var/lib/snapd/snap/node/11315/bin/node)

/var/lib/snapd/snap/node/11315/bin/node: /lib64/libstdc++.so.6: version `CXXABI_1.3.9' not found (required by /var/lib/snapd/snap/node/11315/bin/node)

/var/lib/snapd/snap/node/11315/bin/node: /lib64/libstdc++.so.6: version `GLIBCXX_3.4.26' not found (required by /var/lib/snapd/snap/node/11315/bin/node)

/var/lib/snapd/snap/node/11315/bin/node: /lib64/libm.so.6: version `GLIBC_2.27' not found (required by /var/lib/snapd/snap/node/11315/bin/node)

/var/lib/snapd/snap/node/11315/bin/node: /lib64/libm.so.6: version `GLIBC_2.29' not found (required by /var/lib/snapd/snap/node/11315/bin/node)

/var/lib/snapd/snap/node/11315/bin/node: /lib64/libc.so.6: version `GLIBC_2.33' not found (required by /var/lib/snapd/snap/node/11315/bin/node)

/var/lib/snapd/snap/node/11315/bin/node: /lib64/libc.so.6: version `GLIBC_2.34' not found (required by /var/lib/snapd/snap/node/11315/bin/node)

/var/lib/snapd/snap/node/11315/bin/node: /lib64/libc.so.6: version `GLIBC_2.27' not found (required by /var/lib/snapd/snap/node/11315/bin/node)

/var/lib/snapd/snap/node/11315/bin/node: /lib64/libc.so.6: version `GLIBC_2.32' not found (required by /var/lib/snapd/snap/node/11315/bin/node)

/var/lib/snapd/snap/node/11315/bin/node: /lib64/libc.so.6: version `GLIBC_2.28' not found (required by /var/lib/snapd/snap/node/11315/bin/node)

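/var/lib/snapd/snap/node/11315/bin/node: /lib64/libc.so.6: version `GLIBC_2.25' not found (required by /var/lib/snapd/snap/node/11315/bin/node)

A snap installed in classic mode still depends on the system libraries, and we cannot use strict confinement instead, because Node.js inevitably needs access to system resources.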
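To see at a glance how far behind the system is, the error output can be condensed into the highest glibc version the binary asks for. This is only a rough sketch: `highest_glibc_needed` is a helper name I made up, and it looks only at `GLIBC_` symbols, ignoring the `GLIBCXX_` and `CXXABI_` ones from libstdc++.

```shell
#!/usr/bin/env bash
# Sketch: summarise "version `GLIBC_x.y' not found" loader errors.

# highest_glibc_needed: reads loader error lines on stdin and prints the
# highest GLIBC_x.y symbol version mentioned (GLIBCXX_ lines do not match).
highest_glibc_needed() {
  grep -o 'GLIBC_[0-9][0-9.]*' | sed 's/^GLIBC_//' | sort -V | tail -n 1
}

# Usage (not executed here):
#   node -v 2>&1 | highest_glibc_needed   # what the binary wants
#   getconf GNU_LIBC_VERSION              # what the system provides
```

If the first number is higher than the second, no amount of repackaging Node.js will help short of a newer glibc, which in practice means a newer operating system.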

Actual Deployment

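That left only one option: replacing or upgrading the operating system. So I moved to my own Ubuntu server and, in the end, went back to the old route of installing directly with the install script.

curl -fsSL https://openclaw.ai/install.sh | bash

This script automatically installs the Node.js environment and OpenClaw for us, but it may hang. Running npm install -g openclaw@xxxx by hand reveals the error messages: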

m error Not searching for unused variables given on the command line.

npm error -- Configuring incomplete, errors occurred!

npm error Not searching for unused variables given on the command line.

npm error -- Configuring incomplete, errors occurred!

npm error [node-llama-cpp] The prebuilt binary for platform "linux" "x64" is not compatible with the current system, falling back to building from source

npm error CMake Error at CMakeLists.txt:1 (cmake_minimum_required):

npm error CMake 3.19 or higher is required. You are running version 3.16.3

npm error

npm error

npm error CMake Error at CMakeLists.txt:1 (cmake_minimum_required):

npm error CMake 3.19 or higher is required. You are running version 3.16.3

npm error

npm error

npm error ERROR OMG Process terminated: 1

npm error [node-llama-cpp] Failed to build llama.cpp with no GPU support. Error: SpawnError: Command npm run -s cmake-js-llama -- compile --log-level warn --config Release --arch=x64 --out localBuilds/linux-x64 --runtime-version=22.22.1 --parallel=4 --CDGGML_BUILD_NUMBER=1 --CDCMAKE_CONFIGURATION_TYPES=Release --CDNLC_CURRENT_PLATFORM=linux-x64 --CDNLC_TARGET_PLATFORM=linux-x64 --CDNLC_VARIANT=b8121 --CDGGML_METAL=OFF --CDGGML_CCACHE=OFF --CDLLAMA_CURL=OFF --CDLLAMA_HTTPLIB=OFF --CDLLAMA_BUILD_BORINGSSL=OFF --CDLLAMA_OPENSSL=OFF exited with code 1

npm error at createError (file:///usr/lib/node_modules/openclaw/node_modules/node-llama-cpp/dist/utils/spawnCommand.js:34:20)

npm error at ChildProcess.<anonymous> (file:///usr/lib/node_modules/openclaw/node_modules/node-llama-cpp/dist/utils/spawnCommand.js:47:24)

npm error at ChildProcess.emit (node:events:519:28)

npm error at ChildProcess._handle.onexit (node:internal/child_process:293:12)

npm error SpawnError: Command npm run -s cmake-js-llama -- compile --log-level warn --config Release --arch=x64 --out localBuilds/linux-x64 --runtime-version=22.22.1 --parallel=4 --CDGGML_BUILD_NUMBER=1 --CDCMAKE_CONFIGURATION_TYPES=Release --CDNLC_CURRENT_PLATFORM=linux-x64 --CDNLC_TARGET_PLATFORM=linux-x64 --CDNLC_VARIANT=b8121 --CDGGML_METAL=OFF --CDGGML_CCACHE=OFF --CDLLAMA_CURL=OFF --CDLLAMA_HTTPLIB=OFF --CDLLAMA_BUILD_BORINGSSL=OFF --CDLLAMA_OPENSSL=OFF exited with code 1

npm error at createError (file:///usr/lib/node_modules/openclaw/node_modules/node-llama-cpp/dist/utils/spawnCommand.js:34:20)

npm error at ChildProcess.<anonymous> (file:///usr/lib/node_modules/openclaw/node_modules/node-llama-cpp/dist/utils/spawnCommand.js:47:24)

npm error at ChildProcess.emit (node:events:519:28)

npm error at ChildProcess._handle.onexit (node:internal/child_process:293:12)

npm error A complete log of this run can be found in: /root/.npm/_logs/2026-03-07T11_53_48_277Z-debug-0.log

root@toy:~# cmake --version

cmake version 3.16.3

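CMake suite maintained and supported by Kitware (kitware.com/cmake).

The CMake that Ubuntu installs by default is too old (3.16.3, while the node-llama-cpp build requires 3.19 or higher) and needs upgrading. Use the following script: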

# Remove the old version (if any)
sudo apt remove --purge cmake

# Install the required dependencies
sudo apt update
sudo apt install -y gpg wget

# Add the Kitware signing key
wget -O - https://apt.kitware.com/keys/kitware-archive-latest.asc 2>/dev/null | gpg --dearmor - | sudo tee /usr/share/keyrings/kitware-archive-keyring.gpg >/dev/null

# Add the Kitware repository (pick the codename that matches your Ubuntu release, e.g. focal for 20.04, jammy for 22.04, noble for 24.04)
echo 'deb [signed-by=/usr/share/keyrings/kitware-archive-keyring.gpg] https://apt.kitware.com/ubuntu/ focal main' | sudo tee /etc/apt/sources.list.d/kitware.list
sudo apt update

# Install cmake
sudo apt install cmake

Now re-running curl -fsSL https://openclaw.ai/install.sh | bash completes the installation, and initialization can begin.

[1/3] Preparing environment

✓ Node.js v22.22.1 found

· Active Node.js: v22.22.1 (/usr/bin/node)

· Active npm: 10.9.4 (/usr/bin/npm)

[2/3] Installing OpenClaw

✓ Git already installed

· Installing OpenClaw v2026.3.2

✓ OpenClaw npm package installed

✓ OpenClaw installed

[3/3] Finalizing setup

· Running doctor to migrate settings

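✓ Doctor complete

In the initialization wizard you can simply answer yes all the way through. The core steps are configuring the model and connecting Feishu. What the wizard actually does is generate or modify the configuration file .openclaw/openclaw.json and start the service: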

{
  // lots omitted above
  "models": {
    "mode": "merge",
    "providers": {
      "custom-api-deepseek-com": {
        "baseUrl": "https://api.deepseek.com",
        "apiKey": "your own key",
        "api": "openai-completions",
        "models": [
          {
            "id": "deepseek-reasoner",
            "name": "deepseek-reasoner (Custom Provider)",
            "reasoning": false,
            "input": [
              "text"
            ],
            "cost": {
              "input": 0,
              "output": 0,
              "cacheRead": 0,
              "cacheWrite": 0
            },
            "contextWindow": 16000,
            "maxTokens": 4096
          }
        ]
      }
    }
  },
  // lots omitted in between
  "channels": {
    "feishu": {
      "enabled": true,
      "appId": "your own App ID",
      "appSecret": "your own secret",
      "connectionMode": "websocket",
      "domain": "feishu",
      "groupPolicy": "allowlist",
      "groupAllowFrom": [
        "oc_18c84e60ae9df0bc2db30c9444d97041"
      ]
    }
  },
  // lots omitted below
}

Only the core parts are shown here: the model configuration and the Feishu connection. Because I did not see DeepSeek among the options in the openclaw onboard setup, the model is configured as a custom provider that uses the OpenAI-compatible API.
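Before trusting the custom provider entry, it can help to confirm that the key and base URL work outside OpenClaw. The sketch below is my own and not part of OpenClaw: `DEEPSEEK_API_KEY` is a hypothetical environment variable name, `chat_payload` and `deepseek_smoke_test` are illustrative helpers, and the `/chat/completions` path is assumed from the OpenAI-compatible convention.

```shell
#!/usr/bin/env bash
# Sketch: one tiny request against the OpenAI-compatible endpoint.
# Assumptions: DEEPSEEK_API_KEY holds the key; the path is /chat/completions.

# chat_payload MODEL PROMPT: prints a minimal chat-completions request body.
# The prompt is inserted verbatim, so keep it free of quotes and backslashes.
chat_payload() {
  printf '{"model":"%s","messages":[{"role":"user","content":"%s"}],"max_tokens":16}' "$1" "$2"
}

# deepseek_smoke_test: sends the request and prints only the HTTP status code.
deepseek_smoke_test() {
  curl -sS -o /dev/null -w '%{http_code}\n' \
    -H "Authorization: Bearer ${DEEPSEEK_API_KEY:?set DEEPSEEK_API_KEY first}" \
    -H 'Content-Type: application/json' \
    -d "$(chat_payload deepseek-reasoner ping)" \
    https://api.deepseek.com/chat/completions
}

# Usage (not executed here):
#   DEEPSEEK_API_KEY=... deepseek_smoke_test
```

A 200 status suggests the key and URL are fine, so any remaining problem is more likely in the OpenClaw configuration itself. A 401 points at the key.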

We can check the current state with openclaw status. I recommend blocking the dashboard port 18789 at the firewall to avoid unnecessary trouble.
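To find out whether the dashboard port is reachable from outside at all, one option is to look at what address it is bound to. This is a sketch under assumptions: `listens_publicly` is a helper name of my own, it expects the column layout of `ss -ltn`, and the suggested `ufw` rule only applies if ufw is the firewall in use on the server.

```shell
#!/usr/bin/env bash
# Sketch: detect whether a TCP port is bound to a non-loopback address.

# listens_publicly PORT: reads `ss -ltn` output on stdin and succeeds when
# PORT is listening on anything other than 127.x.x.x or [::1].
listens_publicly() {
  awk -v port="$1" '
    $1 == "LISTEN" && $4 ~ (":" port "$") && $4 !~ /^(127\.|\[::1\])/ { found = 1 }
    END { exit(found ? 0 : 1) }
  '
}

# Usage (not executed here):
#   if ss -ltn | listens_publicly 18789; then
#     sudo ufw deny 18789/tcp
#   fi
```

If the port only listens on loopback, the firewall rule is redundant but harmless. Reaching the dashboard through an SSH tunnel then stays possible either way.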

To change the configuration later, use openclaw configure. (It feels like a throwback to the administration style of ancient times.)

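Connecting to Feishu

The deployment above is now complete. At this point one would usually use the TUI to check that the large language model is actually connected. But we certainly do not want to operate it from the command line, since that would make it no different from Claude Code. What we want is an assistant that is on call 24 hours a day and gets work done in the background.

So we need to connect it to a chat application. In my case, that is Feishu.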

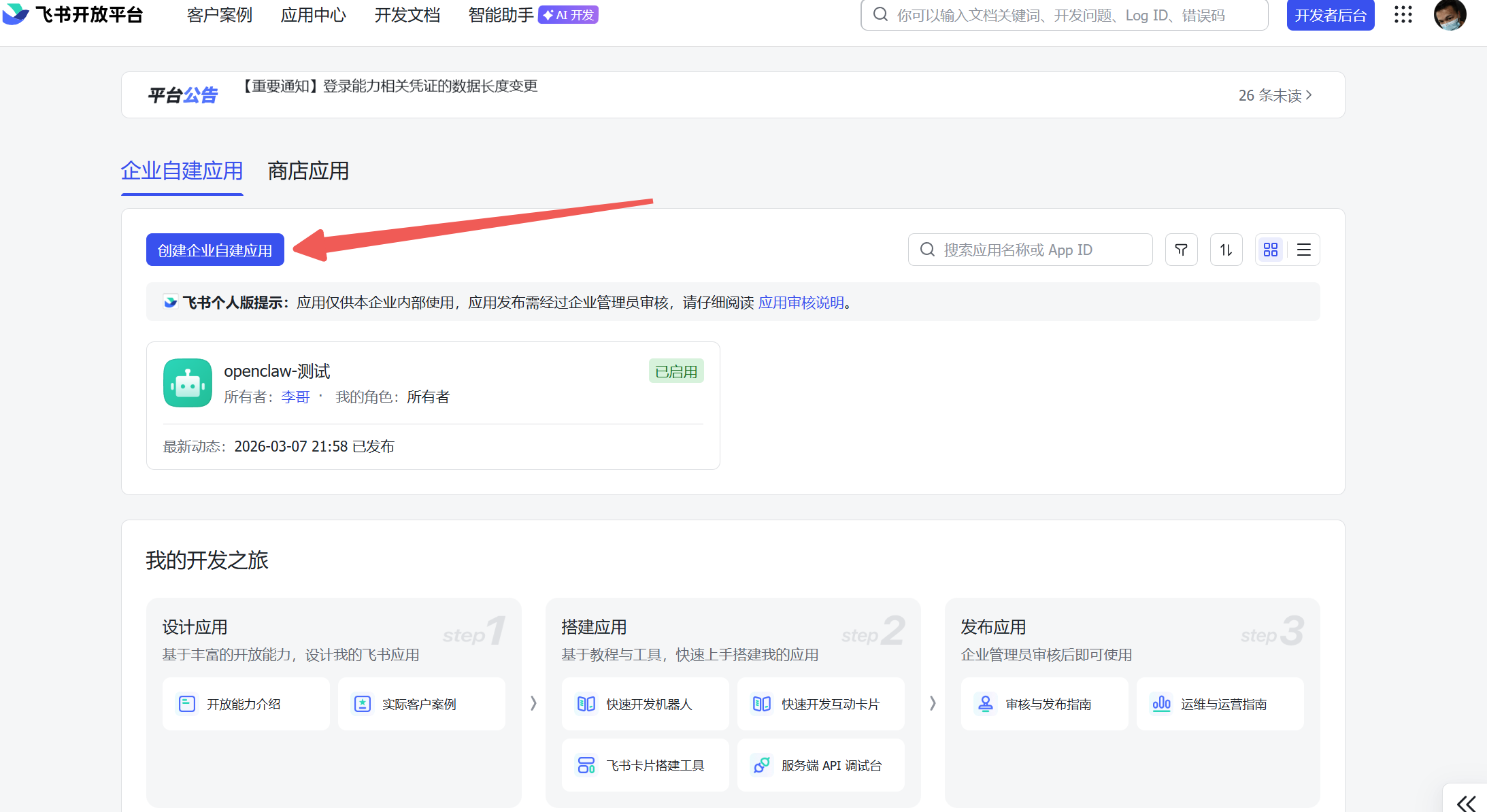

First, log in to the Feishu Open Platform and create a bot application by creating a custom enterprise app (企业自建应用).

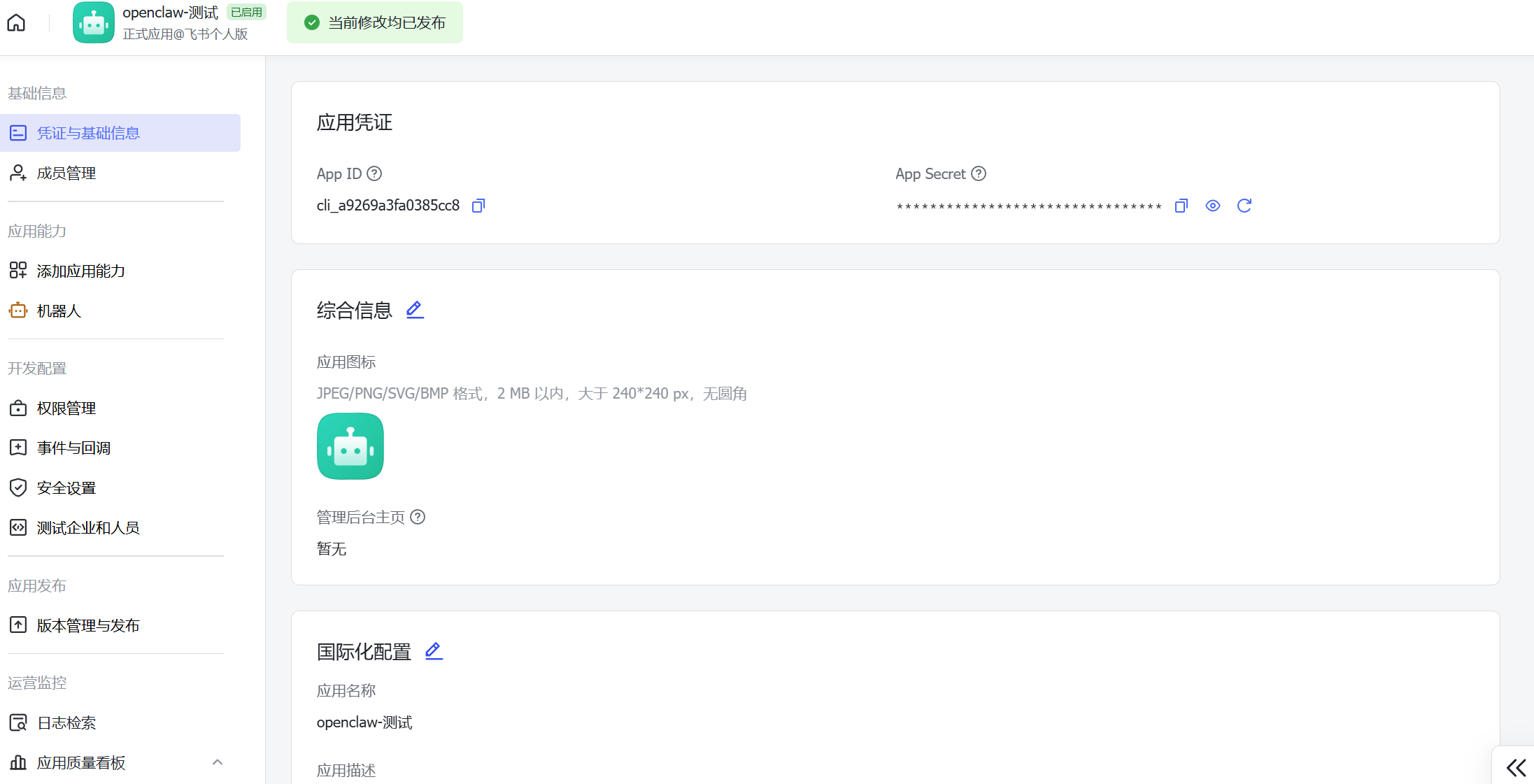

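Copy the APP_ID and APP_SECRET and put them where the deployment stage above needs them (the appId and appSecret fields of the feishu channel).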
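A quick way to confirm the copied credentials are valid is to request a tenant access token from the Feishu Open Platform directly. This is just a sketch: `token_request_body` and `feishu_check_credentials` are helper names I made up, and I am assuming the standard internal-app token endpoint on open.feishu.cn.

```shell
#!/usr/bin/env bash
# Sketch: verify APP_ID / APP_SECRET by asking for a tenant_access_token.

# token_request_body APP_ID APP_SECRET: prints the JSON body of the request.
token_request_body() {
  printf '{"app_id":"%s","app_secret":"%s"}' "$1" "$2"
}

# feishu_check_credentials APP_ID APP_SECRET: prints the raw JSON response.
# A response containing "code":0 means the credentials were accepted.
feishu_check_credentials() {
  curl -sS -X POST \
    -H 'Content-Type: application/json; charset=utf-8' \
    -d "$(token_request_body "$1" "$2")" \
    https://open.feishu.cn/open-apis/auth/v3/tenant_access_token/internal
}

# Usage (not executed here):
#   feishu_check_credentials "$APP_ID" "$APP_SECRET"
```

Checking this before touching openclaw.json separates credential mistakes from problems with permissions or event subscriptions, which only show up later.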

Add the bot capability

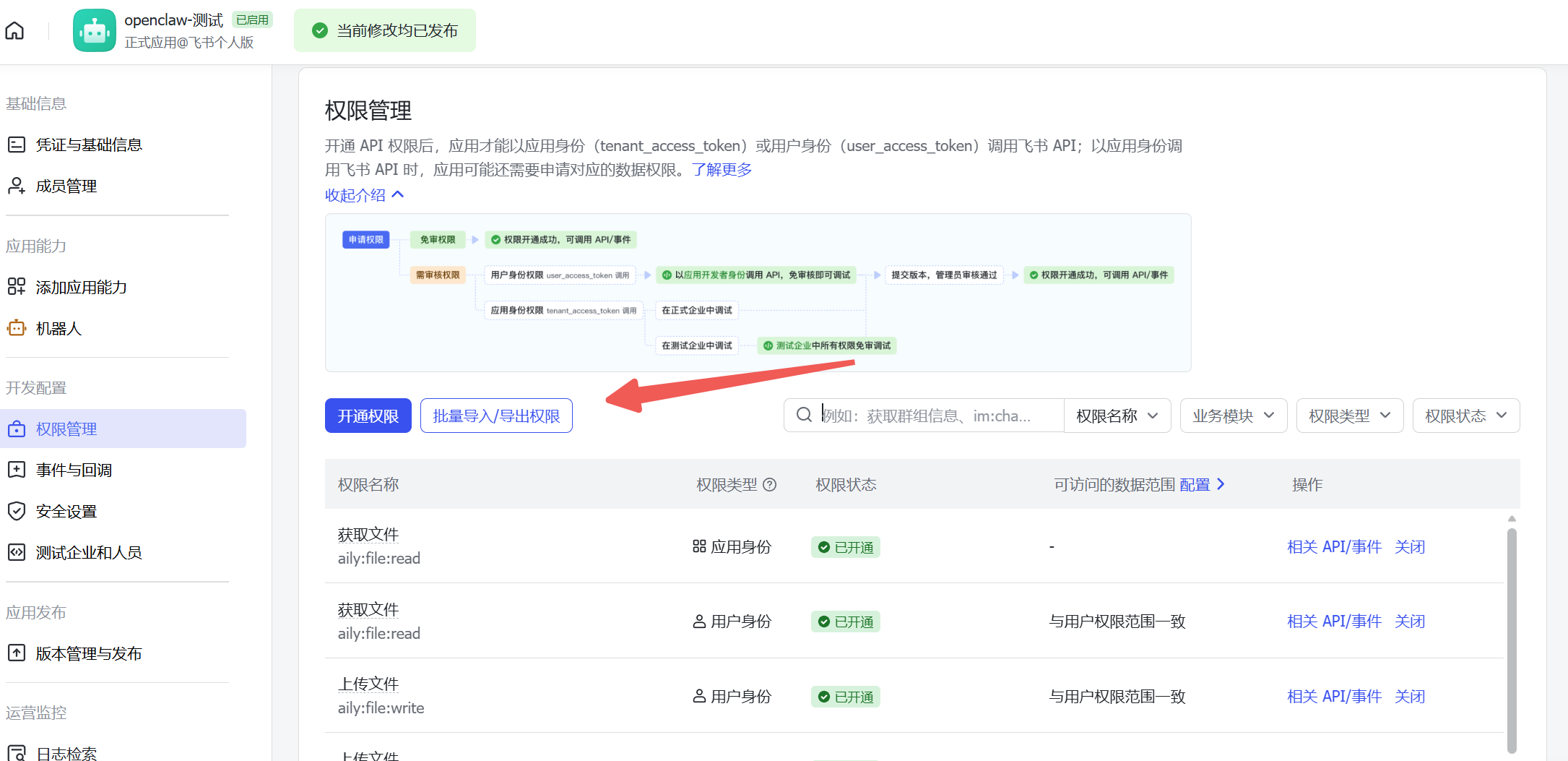

Import permissions

{
  "scopes": {
    "tenant": [
      "aily:file:read",
      "aily:file:write",
      "application:application.app_message_stats.overview:readonly",
      "application:application:self_manage",
      "application:bot.menu:write",
      "cardkit:card:write",
      "contact:user.employee_id:readonly",
      "corehr:file:download",
      "docs:document.content:read",
      "event:ip_list",
      "im:chat",
      "im:chat.access_event.bot_p2p_chat:read",
      "im:chat.members:bot_access",
      "im:message",
      "im:message.group_at_msg:readonly",
      "im:message.group_msg",
      "im:message.p2p_msg:readonly",
      "im:message:readonly",
      "im:message:send_as_bot",
      "im:resource",
      "sheets:spreadsheet",
      "wiki:wiki:readonly"
    ],
    "user": ["aily:file:read", "aily:file:write", "im:chat.access_event.bot_p2p_chat:read"]
  }
}

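Configure events and callbacks. Here, choose the WebSocket long-connection mode and subscribe to the message-received event and the first-conversation event:

Finally, create a version and publish it. After that, you can start testing.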

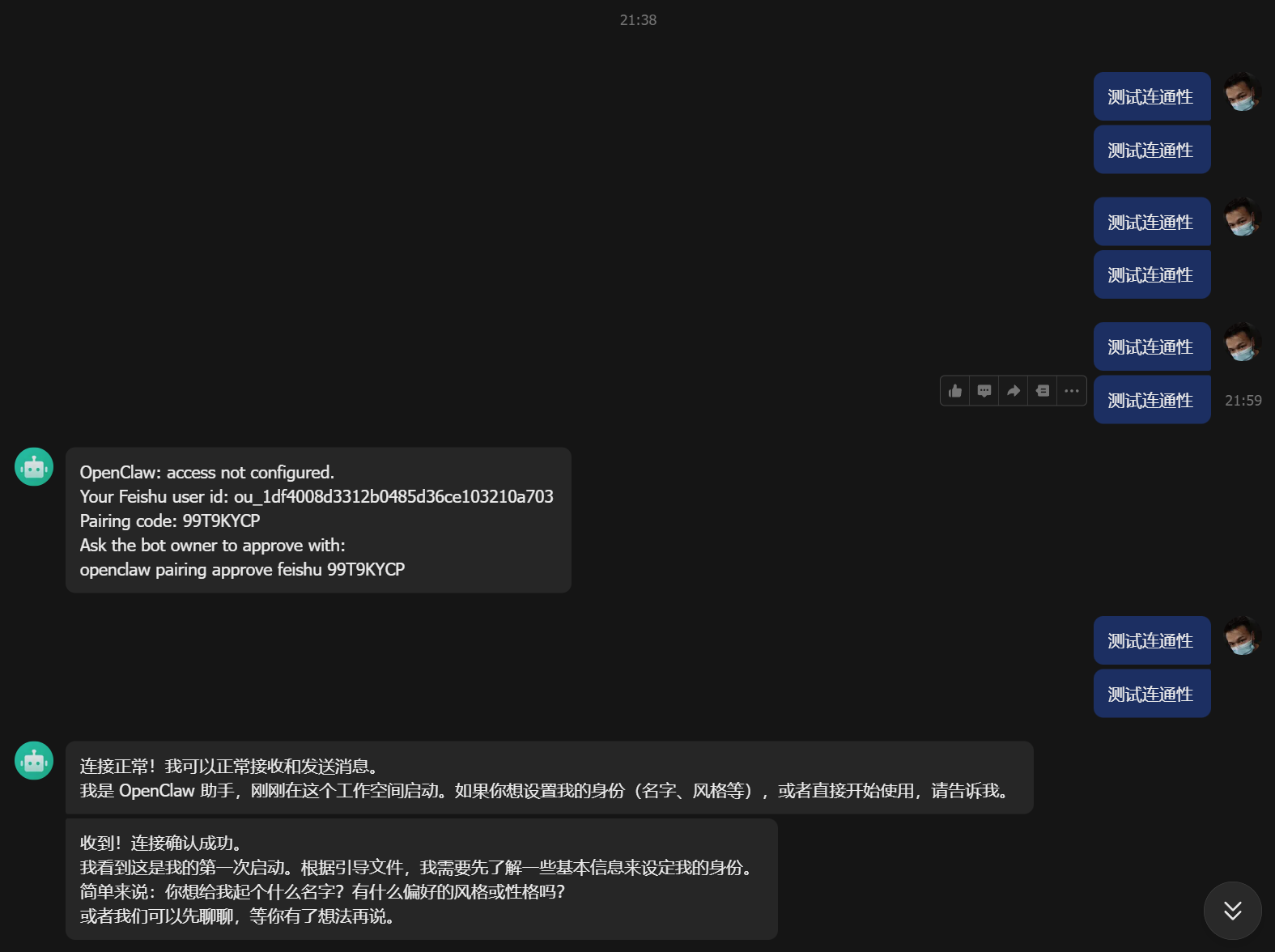

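Pairing

The first conversation requires pairing before you can go any further, as follows:

Run openclaw pairing approve feishu 99T9KYCP to complete the pairing. Only then is the connection fully working.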

TO DO

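So the environment is now in place. The next step is to install all kinds of Skills and MCP servers for OpenClaw to extend what it can do. But that is a story for another time.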